Once upon a time, I stumbled into a forum thread about image censorship. The forum was made up of clipped images of funny Facebook posts, and at the time people were beginning to realize that you can’t just post names online willy-nilly. Censoring out the names attached to the posts was a requirement, and there were many ways to do it, but some of them could be undone.

What is Censoring, or Redacting?

That’s a tricky question. Merriam-Webster gives a circular definition: censorship is the act of censoring, and censoring is the work of a censor. So I’ve had to piece together the bits of meaning from each of those entries to build a cohesive definition here. Censorship is the act of keeping information out of the hands of people who don’t need to know it. This information could be moral (censoring swears out of public TV shows), private (people’s faces), or some other sort (no free branding). This isn’t a perfect definition, but it’s enough for this limited article.

The same goes for redaction, but with a little more intensity. Need-to-know info has to be shared, but sharing it could put people or property in danger. The easiest way to share that info without putting people in danger is to make them anonymous. By my own working definition, then, redaction is the act of cutting out specifics (and anonymizing people) so the information can be shared.

People can still guess (Tom Clancy was famous for connecting dots to write what-if stories about redacted info), but the info is more or less anonymous to the general public.

Pixelate

Many people choose pixelation over other methods of image redaction because it’s gentler on the look of the rest of the image and destroys more detail than most kinds of “smooth” blurring. A lot of people can still make out what brand of soda a pixelated can is, and context will usually tell viewers that an obscene gesture is what’s behind the boxes on a TV show. In general, though, it works pretty well to get rid of the finer details that could identify somebody. More or less.
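To make “destroys the finer details” concrete, here is a minimal sketch of the common block-averaging approach to pixelation. It assumes a grayscale image stored as a list of rows of 0–255 values; the function name `pixelate` is just for illustration and isn’t any particular app’s code. Every pixel in a tile is replaced with the tile’s average, so many different originals end up with the same pixelated output.

```python
def pixelate(image, block):
    """Replace each block x block tile of a grayscale image with its average.

    `image` is a list of rows of ints (0-255). Tiles on the right and bottom
    edges may be smaller than `block` if the size doesn't divide evenly.
    """
    height, width = len(image), len(image[0])
    out = [row[:] for row in image]  # copy so the input is left untouched
    for top in range(0, height, block):
        for left in range(0, width, block):
            ys = range(top, min(top + block, height))
            xs = range(left, min(left + block, width))
            tile = [image[y][x] for y in ys for x in xs]
            avg = round(sum(tile) / len(tile))
            # Every pixel in the tile gets the same value: the detail is gone.
            for y in ys:
                for x in xs:
                    out[y][x] = avg
    return out


# Two very different 2x2 "images" collapse to the same pixelated block.
a = [[0, 255], [255, 0]]
b = [[255, 0], [0, 255]]
print(pixelate(a, 2) == pixelate(b, 2))  # True
```

The catch is that averaging only narrows the possibilities. It doesn’t eliminate them. That is what leaves room for the guessing game described next.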

As machines get better and better at identifying patterns and finding the stop signs in CAPTCHAs, it gets easier and easier to recreate a human face from a pixelated one. Gizmodo has an article on the subject (see the Gizmodo link in the sources), and it’s a good demonstration of why pixelation shouldn’t be used when the info is really important. Picture this: you have a 10,000-piece puzzle, most of it is one color, and you don’t have a box to look at. You do your best, but end up with a blob. This is early computers trying to un-pixelate an image.

For a while, that was great! It was very difficult to decipher who a protected witness was.

Then, further down the line, you get the box, and a set of glasses that lets you distinguish colors better – turns out that one color from before is actually like 30! So you get to work piecing it together. The box is blurry, so that’s a bummer, but other people with a completed puzzle can show you theirs. And someone posts to your database/puzzle forum an image very similar to the parts of the puzzle you’ve already completed. Suddenly you’re able to finish decoding the image for what it is: a human face.

That’s where we’re at right now. Pixelating the face of someone in the background of a TV show likely won’t lead to anybody going through all this effort to find them. It could become a problem, though, for people being pixelated out of compromising images, court hearings, interviews, and so on, where it’s very important that they aren’t found.

Text is even easier. Picture the scenario above, except you know what letters look like, there are only two or three colors even with the glasses, and the puzzle is only about 500 pieces. Don’t. Pixelate. Text. There’s a reason that governments go the permanent marker route. This article here does a great job of describing the undoing process.
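Here is a rough sketch of why text is so easy to undo. If an attacker knows the block size and can render candidate characters the same way, they can pixelate every candidate and keep whichever one best matches the redacted image. The 4x4 “glyphs” below are made up for illustration. A real attack would render candidate text in the same font, size, and position as the original screenshot.

```python
# Hypothetical 4x4 glyph bitmaps (255 = ink, 0 = background).
GLYPHS = {
    "I": [[0, 255, 255, 0]] * 4,
    "L": [[255, 0, 0, 0]] * 3 + [[255, 255, 255, 255]],
    "T": [[255, 255, 255, 255]] + [[0, 255, 255, 0]] * 3,
    "O": [[255, 255, 255, 255], [255, 0, 0, 255],
          [255, 0, 0, 255], [255, 255, 255, 255]],
}


def pixelate(image, block):
    """Return one averaged value per block x block tile (a downsampled grid)."""
    h, w = len(image), len(image[0])
    return [[round(sum(image[y][x]
                       for y in range(top, min(top + block, h))
                       for x in range(left, min(left + block, w)))
                   / (min(block, h - top) * min(block, w - left)))
             for left in range(0, w, block)]
            for top in range(0, h, block)]


def distance(a, b):
    """Sum of absolute differences between two same-sized grids."""
    return sum(abs(p - q) for ra, rb in zip(a, b) for p, q in zip(ra, rb))


def guess_glyph(target, block):
    """Pixelate every candidate and return the one closest to the target."""
    return min(GLYPHS, key=lambda ch: distance(pixelate(GLYPHS[ch], block), target))


secret = pixelate(GLYPHS["T"], 2)
print(secret)                   # [[191, 191], [128, 128]]
print(guess_glyph(secret, 2))   # T
```

The search still works when the pixelated values are slightly off, for example after image compression, because it only needs the closest match, not an exact one. Only a few dozen characters are possible, so the search stays small.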

Blur

Blur is similar to pixelation in a lot of ways. The pieces of the puzzle are much smaller, but you should begin to see a pattern with algorithmic censoring: once somebody knows how it was done, it can be undone. Fortunately, most people using blur for important things know to crank the blur factor so high that the image could have been a lot of things (or people), and poorly written AI can confuse matters further. Algorithms to undo blur aren’t perfect, so creating a face out of nothing doesn’t mean it’s the right face.
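Here is a toy illustration of “once somebody knows how it was done, it can be undone.” It uses a one-dimensional box blur with zero padding, which is a simplification and not how any specific app blurs. If you know the blur width and keep exact values, you can peel the original signal back out one sample at a time. Real blurs are two-dimensional, often Gaussian, cropped at the edges, and rounded to whole numbers. Those differences are part of why real unblurring is only approximate.

```python
from fractions import Fraction


def box_blur_1d(signal, k=3):
    """Full 1D box blur: out[i] = mean(signal[i-k+1 .. i]).

    Samples outside the signal count as 0 (assumed zero padding), so the
    output is len(signal) + k - 1 long. Fractions keep the values exact.
    """
    n = len(signal)
    out = []
    for i in range(n + k - 1):
        window = [signal[j] for j in range(i - k + 1, i + 1) if 0 <= j < n]
        out.append(Fraction(sum(window), k))
    return out


def unblur_1d(blurred, k=3):
    """Invert box_blur_1d, assuming we know k and the zero padding."""
    n = len(blurred) - k + 1
    signal = []
    for i in range(n):
        # k * out[i] = signal[i] + (the up-to-k-1 samples just before it),
        # and those earlier samples have already been recovered.
        earlier = sum(signal[max(0, i - k + 1):i])
        signal.append(k * blurred[i] - earlier)
    return signal


original = [10, 200, 30, 0, 90]
blurred = box_blur_1d(original)
print(unblur_1d(blurred) == original)         # True: exact recovery
print(unblur_1d([round(v) for v in blurred]))  # [9, 201, 30, 0, 90]
```

The second line shows what rounding does, the way 8-bit images are rounded. The recovery becomes close but no longer exact, and errors can build up as the signal gets longer.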

Source: The Verge

Take this image, for example. It’s blurry and pixel-y, but it’s still clearly former US president Barack Obama. In a perfect world, these systems would have access to faces from the entire population, but they don’t. They only have access to what the researchers and engineers feed them. If your goal is to keep people from discovering someone’s identity, but you don’t want to slap a blackout square on their face, blurring is still a choice. Just make sure it’s too blurry for both people and machines to make out. Obama in this image has not been blurred nearly enough to thwart human eyes, even though the machine can’t figure it out. As a side note, this is a great example of why facial recognition technology is too immature to use right now!

Black Out (And Sticker) Redaction

From sci-fi shows to tax documents, redacted documents pop up frequently. Completely covering text with black ink or unremovable black squares should destroy the data underneath. It’s a government favorite for that reason! As long as it’s done right, the info is lost.

The problem is doing it right.

The American Bar Association has a blog post on the matter: a failure to completely redact information digitally led to a case falling apart. Separately, the US government got into some hot water with the Italian government a while back over a document containing information the Italians were not supposed to see, including names of officials and checkpoint protocols relating to an Italian operative’s death in Baghdad.

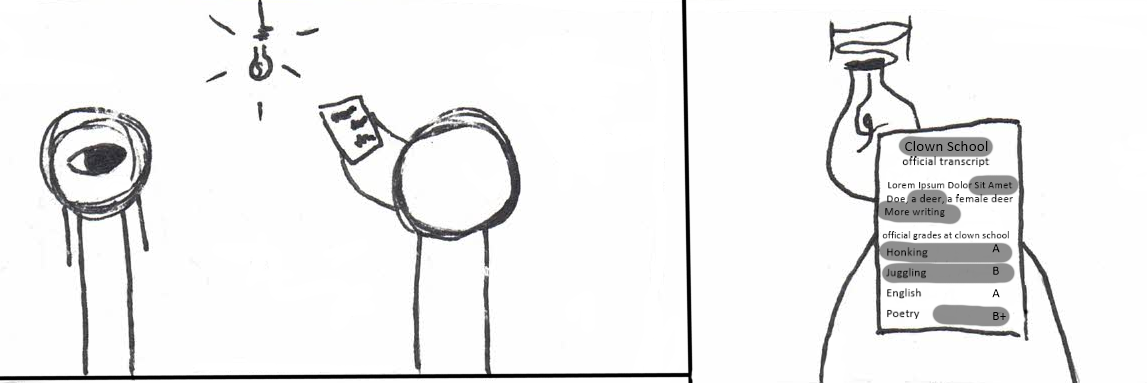

The Stickers

In lower-stakes situations, digital stickers can be imperfect depending on the app used to place them on the image, though that’s more of a .png problem than a problem with sticker apps themselves. Since stickers are mostly used to post funny exchanges online rather than to conceal government secrets, bulletproof security usually isn’t necessary. Treat them accordingly: security is not their main goal. Don’t use them for tax forms.

Additionally, if you truly don’t trust digital apps to completely destroy the data, you can print the page, mark over the info with a marker, and then scan it back in. It’s tedious, it’s annoying, and it requires a scanner, but it’s an option. It’s also not infallible, because even in real life things can look opaque when they aren’t.

Source: Kaspersky Labs

Kaspersky made this image with a digital marker, not an ink one, but it’s still a good demo. Use something marketed for redacting, not just some Crayola water-soluble marker.
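A digital marker that isn’t fully opaque only darkens what’s underneath. The minimal sketch below composites a marker over a row of grayscale pixels using standard alpha blending, then inverts the blend. It assumes a flat black marker with a single known opacity. A real attacker would have to estimate both, but a simple contrast stretch is often enough to spot the text.

```python
def apply_marker(pixels, alpha, marker=0):
    """Blend a marker of opacity `alpha` (0-1) over grayscale pixels (0-255)."""
    return [round(alpha * marker + (1 - alpha) * p) for p in pixels]


def recover(covered, alpha, marker=0):
    """Undo the blend. Only possible because alpha < 1 leaves some signal."""
    return [min(255, max(0, round((c - alpha * marker) / (1 - alpha))))
            for c in covered]


row = [255, 0, 255, 255, 0, 255]     # white paper with two dark "ink" pixels
covered = apply_marker(row, 0.9)     # looks nearly black: values of 0 to ~26
print(covered)
print(recover(covered, 0.9))         # close to the original row
```

The covered row is all dark, but the paper pixels are still measurably lighter than the ink pixels. That difference is all the recovery needs. A marker with an opacity of exactly 1 would leave nothing to divide by.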

Side Note: Government and Redaction Programs

Some art programs store images in layers. Some PDF tools keep redactions reversible until they are applied, so the redactions can be checked before the document is published. With these two problems in mind, mistakes like not merging layers, or using a program that doesn’t actually remove the text (as in, you can still copy it from behind the box), are somewhat understandable. That doesn’t make them any less of a huge mistake.
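Here is a toy model of the layer problem. It is a hypothetical structure, not any real file format. What you see on screen is a composite of the layers, but an unflattened file still stores every layer, including the untouched original under the black box. Flattening replaces the stack with the composite alone.

```python
def render(layers):
    """Composite a bottom-first stack of layers; None means 'transparent here'."""
    out = []
    for i in range(len(layers[0])):
        value = None
        for layer in layers:            # later layers sit on top
            if layer[i] is not None:
                value = layer[i]
        out.append(value)
    return out


def flatten(layers):
    """Keep only what is visible; the covered pixels are discarded."""
    return [render(layers)]


photo = [12, 200, 180, 34]           # hypothetical pixels; 200, 180 are secret
box = [None, 0, 0, None]             # black box drawn on its own layer
layers = [photo, box]

print(render(layers))    # [12, 0, 0, 34]  looks redacted on screen
print(layers[0])         # [12, 200, 180, 34]  but the file still has it
print(flatten(layers))   # [[12, 0, 0, 34]]  only the composite survives
```

Exporting to a single-layer format like PNG works like `flatten` here. Saving in the program’s native layered format does not.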

A major flaw in a redaction program leaked government secrets: users could simply copy the text behind the box, as if the box weren’t even there. Why would a redaction tool ever leave the text intact, when that’s exactly the opposite of what it was advertised for? It wasn’t an isolated incident, either, as the ABA and Italian cases above show. Other ways to redact unsuccessfully include drawing a black box shape over the information in Word and cropping an image in an Office program. The entire picture is still there; it’s just hidden, not destroyed. Don’t do that.
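Here is why a black box on top of text doesn’t remove the text. A PDF page is drawn from a content stream of drawing instructions. The hand-written, uncompressed stream below shows a line of text and then paints a black rectangle over it. Both instructions stay in the file, so anything that reads the text operators still finds the name. The extractor and `redact` helper are hypothetical simplifications. Real PDFs usually compress their streams and encode text in several other ways, which is why actual redaction tools must remove the underlying content themselves.

```python
import re

# Hypothetical page content: draw the text, then paint a black box over it.
CONTENT = b"""BT
/F1 12 Tf
100 700 Td
(Agent: Jane Doe) Tj
ET
0 0 0 rg
95 695 120 16 re f
"""


def naive_text(stream: bytes) -> list[str]:
    """Collect literal strings shown with the Tj operator (ignores escapes)."""
    return [m.decode("latin-1") for m in re.findall(rb"\((.*?)\)\s*Tj", stream)]


def redact(stream: bytes, secret: str) -> bytes:
    """Delete the text-showing operation itself, leaving the black box."""
    pattern = rb"\(" + re.escape(secret.encode("latin-1")) + rb"\)\s*Tj\n?"
    return re.sub(pattern, b"", stream)


print(naive_text(CONTENT))                              # ['Agent: Jane Doe']
print(naive_text(redact(CONTENT, "Agent: Jane Doe")))   # []
```

The box in the first version only changes what gets painted on screen. The second version actually removes the data, which is what “redaction” is supposed to mean.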

Swirl Redaction

Swirl is the worst of all of these options unless the others are executed very poorly. Besides being the ugliest option, it does a poor job of destroying information that other computers could use. An un-swirling algorithm doesn’t need to make assumptions the way it would for pixelation: all of the information is still there, just bent into the shape of a crescent. The algorithm stretches the image and warps it around a central point, but everything is still there. See the side note below on the Swirl Man, who assumed he’d done a good enough job of redacting his face. Now that the cure for swirling is out there, swirl is basically obsolete as a redaction method. Don’t swirl!
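Here is a minimal sketch of why swirl is reversible. It assumes a common style of swirl: each point is rotated around the center by an angle that shrinks with distance from the center. The exact falloff (`1 - r / radius`) is an assumption and varies between programs. Rotation never changes a point’s distance from the center, so the un-swirl step can compute the same angle and simply spin the other way.

```python
import math


def swirl_point(x, y, cx, cy, strength, radius):
    """Rotate (x, y) about (cx, cy) by strength * (1 - r / radius).

    Points at or beyond `radius` are left alone (assumed falloff).
    """
    dx, dy = x - cx, y - cy
    r = math.hypot(dx, dy)
    if r >= radius:
        return x, y
    angle = strength * (1 - r / radius)
    cos_a, sin_a = math.cos(angle), math.sin(angle)
    return cx + dx * cos_a - dy * sin_a, cy + dx * sin_a + dy * cos_a


def unswirl_point(x, y, cx, cy, strength, radius):
    """Rotation preserves r, so the same angle applies; just reverse it."""
    return swirl_point(x, y, cx, cy, -strength, radius)


p = (60.0, 50.0)
swirled = swirl_point(*p, 50, 50, 3.0, 40)
restored = unswirl_point(*swirled, 50, 50, 3.0, 40)
print(swirled)    # moved far from (60, 50)
print(restored)   # back at (60, 50), up to floating-point error
```

A real filter applies this mapping to every pixel and interpolates between pixels, so a little detail is lost to resampling. No information is averaged away the way it is with pixelation, though, so once you know the center, radius, and strength, the face comes back nearly intact.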

Side Note: They Caught The Guy

A while back, police caught a child sex abuser who had hidden his identity only by swirling his face. Swirling, like any other computer effect, uses an algorithm. Algorithms follow rules, and there’s a clear pattern in the swirling that can be undone to retrieve the original image. Simply knowing what tool he’d used was enough to reverse-engineer it and undo the face swirling. He was caught, thankfully, as a result of his own hubris. Here’s the Wikipedia article on his case and capture.

Sources:

https://www.makeuseof.com/tag/easily-pixelate-blur-images-online/

https://stackoverflow.com/questions/4047031/help-with-the-theory-behind-a-pixelate-algorithm

https://en.wikipedia.org/wiki/Pixelation

https://gizmodo.com/researchers-have-created-a-tool-that-can-perfectly-depi-1844051752

http://news.bbc.co.uk/2/hi/europe/4504589.stm

https://vowe.net/archives/005838.html

http://www.cs.cornell.edu/~shmat/shmat_imgobfuscation.pdf

https://help.adobe.com/archive/en_US/acrobat/8/professional/acrobat_8_help.pdf

https://talkingpdf.org/redacting-with-acrobat-8-professional-vs-redax/